Release 0.10.0 (November 29, 2018)

Highlights

- New user interface. Improved look and feel, better performance, and a modernized color scheme. Simplified setup guide for creating new feeds.

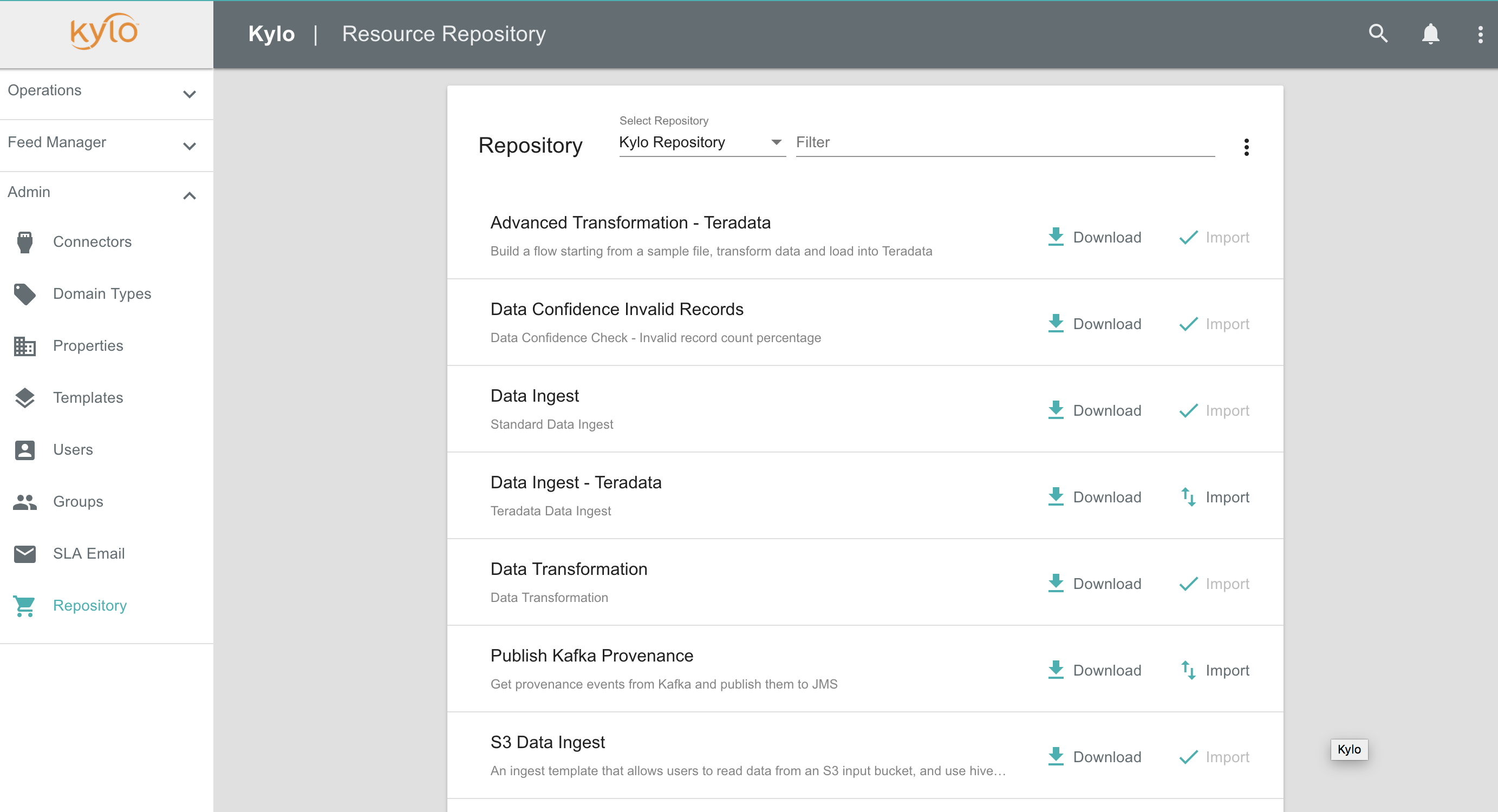

- Template Manager. New template management that lets admins quickly update templates when new versions become available, or publish templates for the enterprise.

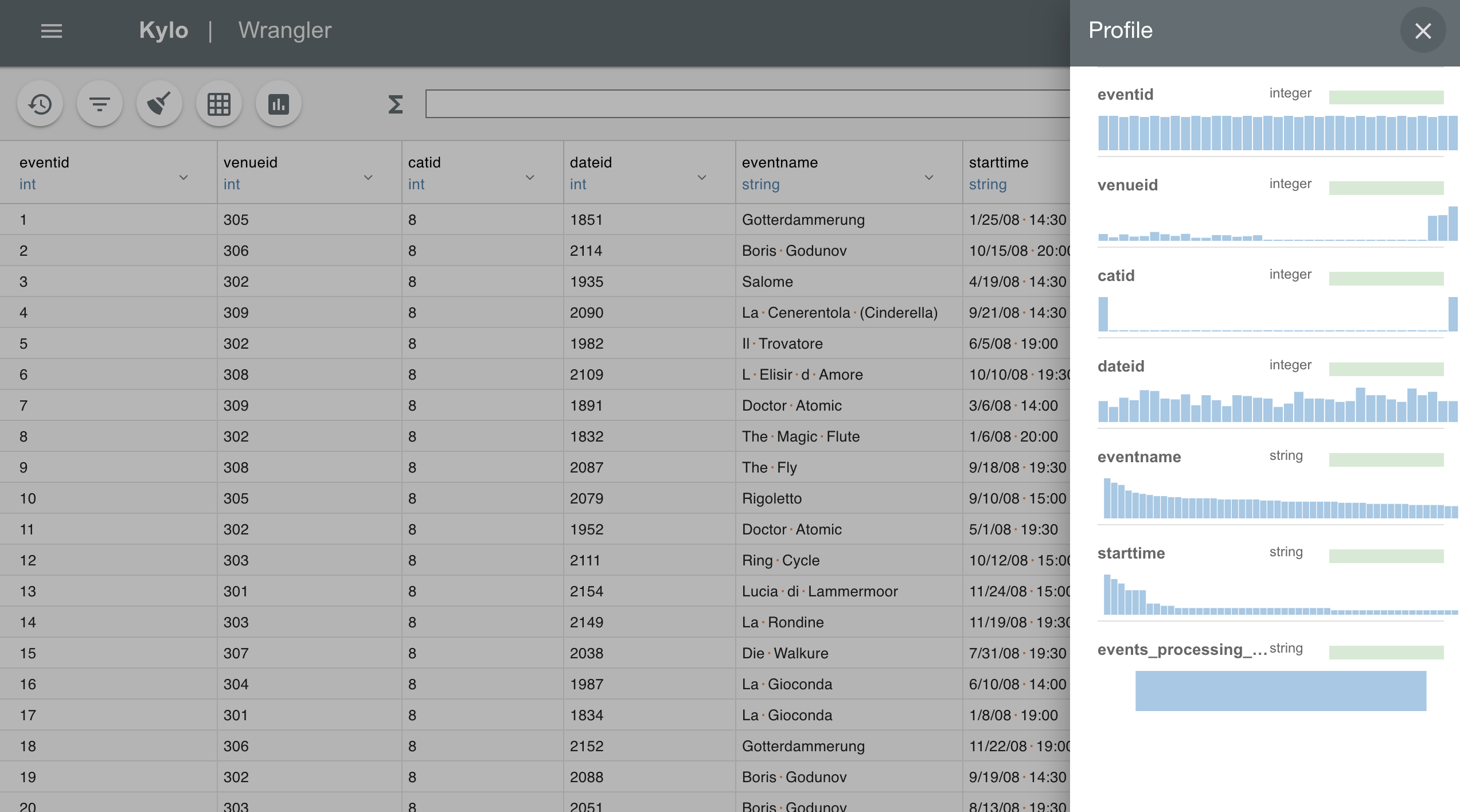

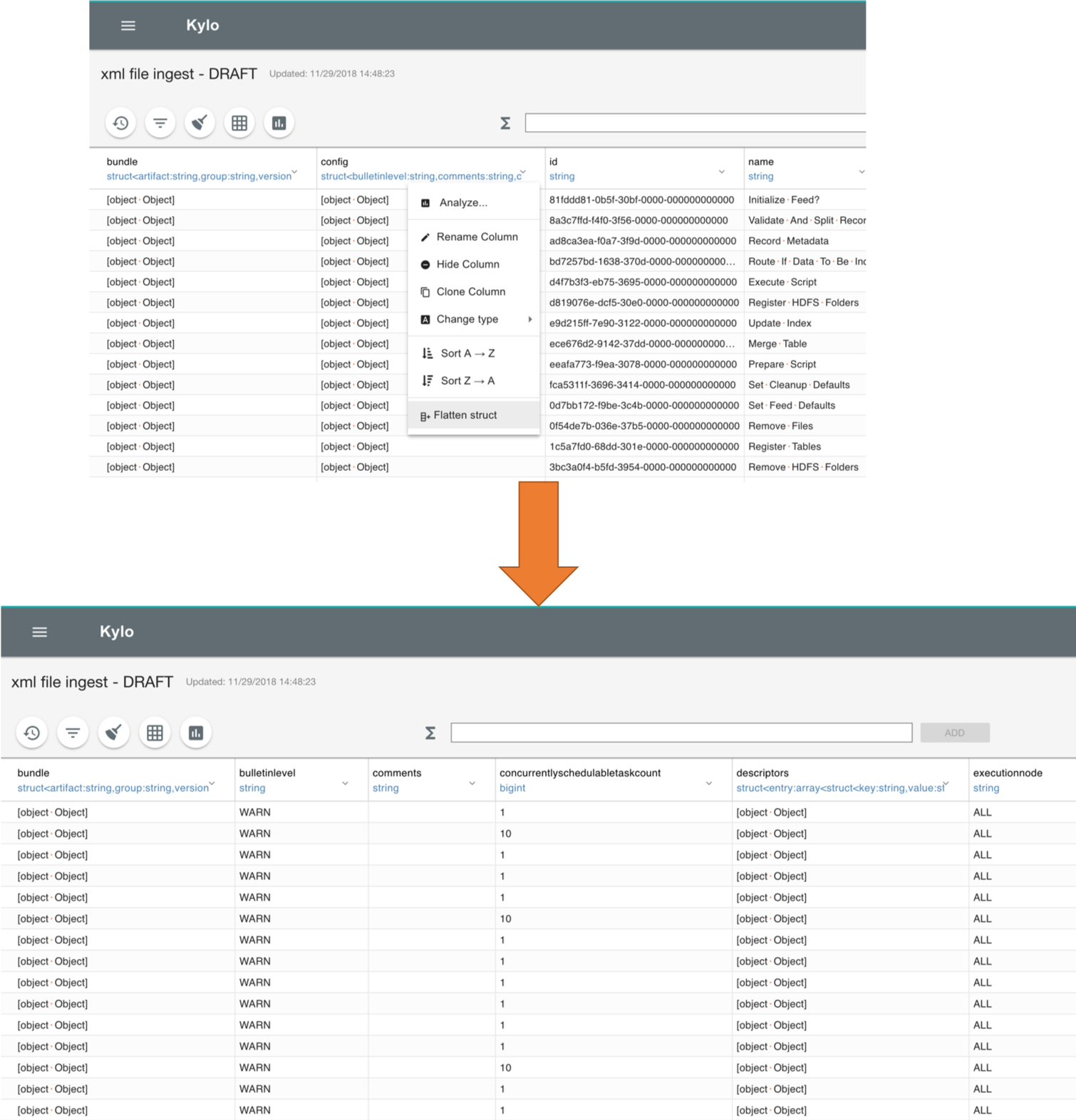

- Wrangler improvements. Many new features have been added to the wrangler to make data scientists more productive. Features include: quick data clean; improved schema manipulation; new data transformations, including imputing new values; column statistics view.

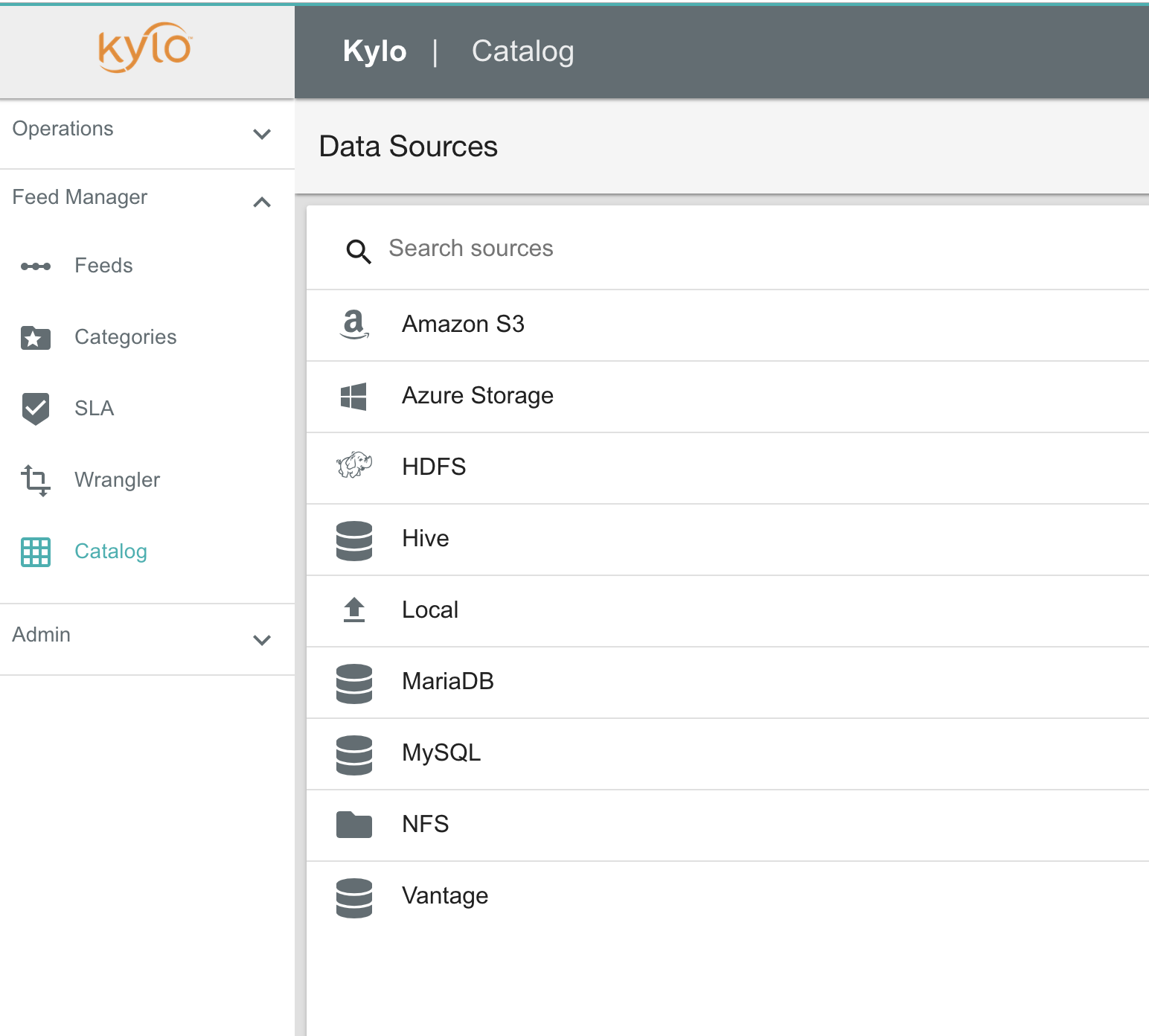

- Data Catalog. New virtual catalog for accessing remote datasets for wrangling, previewing, and feed setup. Kylo 0.10 includes the following connectors: Amazon S3, Azure, HDFS, Hive, JDBC, Local Files, NAS Filesystem.

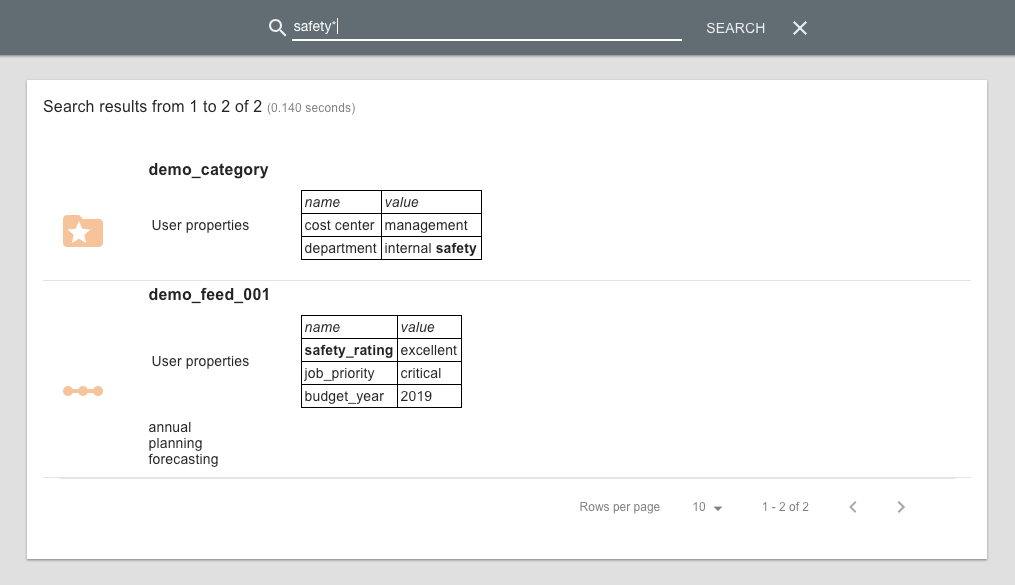

- Search custom properties. Any custom properties defined for feeds and categories are indexed and available via the global metadata search feature.

Important Changes

Kylo UI Plugin Changes

- Kylo UI plugins 0.9.x or earlier will not work with 0.10.0. If you have custom Kylo UI code, please refer to this doc to migrate your code to a 0.10.0 compatible plugin: Kylo UI Plugin Upgrade

- Kylo UI custom feed stepper plugins are not supported. Do not upgrade if you need this functionality.

Catalog Changes

- The Catalog page used to allow you to query and preview data. This has been removed. You now need to go to the wrangler to preview catalog data sets.

Upgrade Instructions from v0.9.1

Note

Before getting started we suggest backing up your Kylo database.

- Back up any custom Kylo plugins

When Kylo is uninstalled it will back up configuration files, but not the /plugin jar files. If you have any custom plugins in either kylo-services/plugin or kylo-ui/plugin, manually back them up to a different location.
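The manual plugin backup could be scripted along these lines. This is a minimal sketch, not part of the Kylo tooling: the function name `backup_kylo_plugins`, the default install root `/opt/kylo`, and the destination directory are assumptions to adjust for your environment.

```shell
#!/usr/bin/env bash
# Sketch: copy custom plugin jars out of the Kylo install tree before uninstalling.
# The function name, default install root, and destination are assumptions.

backup_kylo_plugins() {
  local kylo_home="${1:-/opt/kylo}"
  local dest="${2:-/tmp/kylo-plugin-backup}"
  local component src
  for component in kylo-services kylo-ui; do
    src="$kylo_home/$component/plugin"
    if [ -d "$src" ]; then
      mkdir -p "$dest/$component"
      # -R copies the whole directory, -p preserves timestamps and permissions
      cp -Rp "$src" "$dest/$component/"
      echo "backed up $src"
    else
      echo "skipped $src (not found)"
    fi
  done
}

# Example (hypothetical destination):
#   backup_kylo_plugins /opt/kylo /root/kylo-plugin-backup
```

After the upgrade, the jars can be compared against the new plugin folders before anything is copied back, since 0.9.x UI plugins are not compatible with 0.10.0.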

Note

Kylo UI plugins 0.9.x or earlier will not work with 0.10.0. If you have custom Kylo UI code, please refer to this doc to migrate your code to a 0.10.0 compatible plugin: Kylo UI Plugin Upgrade

- Uninstall Kylo:

/opt/kylo/remove-kylo.sh

- Install the new RPM:

rpm -ivh <RPM_FILE>

Restore previous application.properties files. If you have customized the application.properties, copy the backup from the 0.9.1 install.

4.1 Find the /bkup-config/TIMESTAMP/kylo-services/application.properties file

- Kylo backs up the application.properties file to /opt/kylo/bkup-config/YYYY_MM_DD_HH_MM_millis/kylo-services/application.properties, where YYYY_MM_DD_HH_MM_millis is the timestamp of the backup.
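If several timestamped backups exist, a small helper can pick the most recent one. The sketch below assumes that the timestamp directory names sort chronologically as plain strings (zero-padded fields); the function name `latest_kylo_backup` is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: print the path of the newest backed-up kylo-services application.properties.
# Assumes timestamp directory names sort chronologically as strings.

latest_kylo_backup() {
  local backup_root="${1:-/opt/kylo/bkup-config}"
  local dir latest=""
  # Glob results are sorted, so the last directory seen is the newest
  for dir in "$backup_root"/*/; do
    if [ -d "$dir" ]; then
      latest="${dir%/}"
    fi
  done
  if [ -z "$latest" ]; then
    return 1
  fi
  echo "$latest/kylo-services/application.properties"
}

# Example:
#   latest_kylo_backup /opt/kylo/bkup-config
```

Check the printed path by eye before copying it, since the helper only looks at directory names and does not verify that the file exists.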

4.2 Copy the backup file over to the /opt/kylo/kylo-services/conf folder

### move the application.properties shipped with the .rpm to a backup file
mv /opt/kylo/kylo-services/conf/application.properties /opt/kylo/kylo-services/conf/application.properties.0_10_0_template
### copy the backup properties (Replace the YYYY_MM_DD_HH_MM_millis with the valid timestamp)
cp /opt/kylo/bkup-config/YYYY_MM_DD_HH_MM_millis/kylo-services/application.properties /opt/kylo/kylo-services/conf

4.3 If you copied the backup version of application.properties in step 4.2 you will need to make a couple of other changes based on the 0.10.0 version of the properties file

A new spring profile of ‘kylo-shell’ is needed. Below is an example

vi /opt/kylo/kylo-services/conf/application.properties

## add in the 'kylo-shell' profile (example below)
spring.profiles.include=native,nifi-v1.2,auth-kylo,auth-file,search-esr,jms-activemq,auth-spark,kylo-shell

Add the following new properties below:

#default location where Kylo looks for templates. This is a read-only location and Kylo UI won't be able to publish to this location.
#Additional repositories can be setup using config/repositories.json where templates can be published
kylo.template.repository.default=/opt/kylo/setup/data/templates/nifi-1.0
kylo.install.template.notification=true
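For repeated upgrades across several hosts, the two edits in step 4.3 could be applied with a script instead of by hand. The following is a rough sketch under stated assumptions: the helper names `add_kylo_shell_profile` and `set_property_if_missing` are hypothetical, and the file is assumed to contain a single non-empty spring.profiles.include line.

```shell
#!/usr/bin/env bash
# Sketch: make the step 4.3 changes idempotently. Helper names are hypothetical.

add_kylo_shell_profile() {
  local props="$1"
  # Nothing to do if the profile is already listed
  if grep -Eq '^spring\.profiles\.include=(.*,)?kylo-shell(,|$)' "$props"; then
    return 0
  fi
  # Append ",kylo-shell" to the existing list; the original is kept as <file>.bak
  sed -i.bak -E 's/^(spring\.profiles\.include=.*[^,[:space:]])[[:space:]]*$/\1,kylo-shell/' "$props"
}

set_property_if_missing() {
  local props="$1" key="$2" value="$3"
  # Compare the text before the first '=' so dots in the key are matched literally
  if cut -d= -f1 "$props" | grep -Fxq -- "$key"; then
    return 0
  fi
  # Make sure the new entry starts on its own line
  if [ -n "$(tail -c 1 "$props")" ]; then
    echo >> "$props"
  fi
  printf '%s=%s\n' "$key" "$value" >> "$props"
}

# Example:
#   f=/opt/kylo/kylo-services/conf/application.properties
#   add_kylo_shell_profile "$f"
#   set_property_if_missing "$f" kylo.template.repository.default /opt/kylo/setup/data/templates/nifi-1.0
#   set_property_if_missing "$f" kylo.install.template.notification true
```

Both helpers leave existing values untouched, so running them twice does not duplicate the profile or the properties.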

4.4 Repeat previous copy step (4.2 above) for other relevant backup files to the /opt/kylo/kylo-services/conf folder. Some examples of files:

- spark.properties

- ambari.properties

- elasticsearch-rest.properties

- log4j.properties

- sla.email.properties

NOTE: Be careful not to overwrite configuration files used exclusively by Kylo

4.5 Copy the /bkup-config/TIMESTAMP/kylo-ui/application.properties file to /opt/kylo/kylo-ui/conf

Ensure the new property ‘zuul.routes.api.sensitiveHeaders’ exists. An example is shown below:

vi /opt/kylo/kylo-ui/conf/application.properties

zuul.prefix=/proxy
zuul.routes.api.path=/**
zuul.routes.api.url=http://localhost:8420/api
## add this line below for 0.10.0
zuul.routes.api.sensitiveHeaders

The multipart.maxFileSize and multipart.maxRequestSize properties have changed. Update these 2 properties to be the following:

### allow large file uploads
spring.http.multipart.maxFileSize=100MB
spring.http.multipart.maxRequestSize=-1
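A quick way to confirm that the restored kylo-ui application.properties contains the entries from step 4.5 is to check for the keys before starting Kylo. This is a sketch only; `check_required_properties` is a hypothetical helper, and it checks for the presence of keys, not their values.

```shell
#!/usr/bin/env bash
# Sketch: report which of the given keys are absent from a properties file.
# The helper name is hypothetical; values are not validated.

check_required_properties() {
  local props="$1"
  shift
  local key missing=0
  for key in "$@"; do
    # Take the text before the first '=' on each line, so a bare key such as
    # zuul.routes.api.sensitiveHeaders (no '=' at all) also counts as present
    if ! cut -d= -f1 "$props" | sed 's/[[:space:]]*$//' | grep -Fxq -- "$key"; then
      echo "missing: $key"
      missing=1
    fi
  done
  return "$missing"
}

# Example:
#   check_required_properties /opt/kylo/kylo-ui/conf/application.properties \
#     zuul.routes.api.sensitiveHeaders \
#     spring.http.multipart.maxFileSize \
#     spring.http.multipart.maxRequestSize
```

The function returns a non-zero status when any key is missing, so it can gate a later step in an upgrade script.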

4.6 Ensure the property security.jwt.key matches in both the kylo-services and kylo-ui application.properties files. The property below must have the same value in both of these files:

/opt/kylo/kylo-ui/conf/application.properties

/opt/kylo/kylo-services/conf/application.properties

security.jwt.key=
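The comparison can be done without printing the key to the terminal. The sketch below is one possible approach, with hypothetical helper names `get_property` and `jwt_keys_match`; the default paths are the two files listed above.

```shell
#!/usr/bin/env bash
# Sketch: check that security.jwt.key is set and identical in both files,
# without echoing the secret. Helper names are hypothetical.

get_property() {
  # Print the value of the last line that starts with "<key>="
  local props="$1" key="$2"
  awk -v k="$key" 'index($0, k "=") == 1 { v = substr($0, length(k) + 2) } END { print v }' "$props"
}

jwt_keys_match() {
  local services_props="${1:-/opt/kylo/kylo-services/conf/application.properties}"
  local ui_props="${2:-/opt/kylo/kylo-ui/conf/application.properties}"
  local a b
  a="$(get_property "$services_props" security.jwt.key)"
  b="$(get_property "$ui_props" security.jwt.key)"
  # Succeeds only when the key is non-empty and equal in both files
  [ -n "$a" ] && [ "$a" = "$b" ]
}

# Example:
#   if jwt_keys_match; then echo "jwt keys match"; else echo "jwt keys differ or are unset"; fi
```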

4.7 (If using Elasticsearch for search) Create/Update Kylo Indexes

Execute a script to create/update Kylo indexes. If these already exist, Elasticsearch will report an index_already_exists_exception. It is safe to ignore this and continue. Change the host and port if necessary.

/opt/kylo/bin/create-kylo-indexes-es.sh localhost 9200 1 1

Update NiFi

Stop NiFi

service nifi stop

Run the following shell script to copy over the new NiFi nars/jars to get new changes to NiFi processors and services.

/opt/kylo/setup/nifi/update-nars-jars.sh <NIFI_HOME> <KYLO_SETUP_FOLDER> <NIFI_LINUX_USER> <NIFI_LINUX_GROUP>

Example:

/opt/kylo/setup/nifi/update-nars-jars.sh /opt/nifi /opt/kylo/setup nifi users

Configure NiFi with Kylo’s shared Encryption Key

- Copy the Kylo encryption key file to the NiFi extension config directory

cp /opt/kylo/encrypt.key /opt/nifi/ext-config

- Change the ownership and permissions of the key file to ensure only nifi can read it

chown nifi /opt/nifi/ext-config/encrypt.key
chmod 400 /opt/nifi/ext-config/encrypt.key

- Edit the /opt/nifi/current/bin/nifi-env.sh file and add the ENCRYPT_KEY variable with the key value

export ENCRYPT_KEY="$(< /opt/nifi/ext-config/encrypt.key)"

Start NiFi

service nifi start

Install XML support if not using Hortonworks.

Start Kylo to begin the upgrade

kylo-service start

Note

NiFi must be started and available during the Kylo upgrade process.

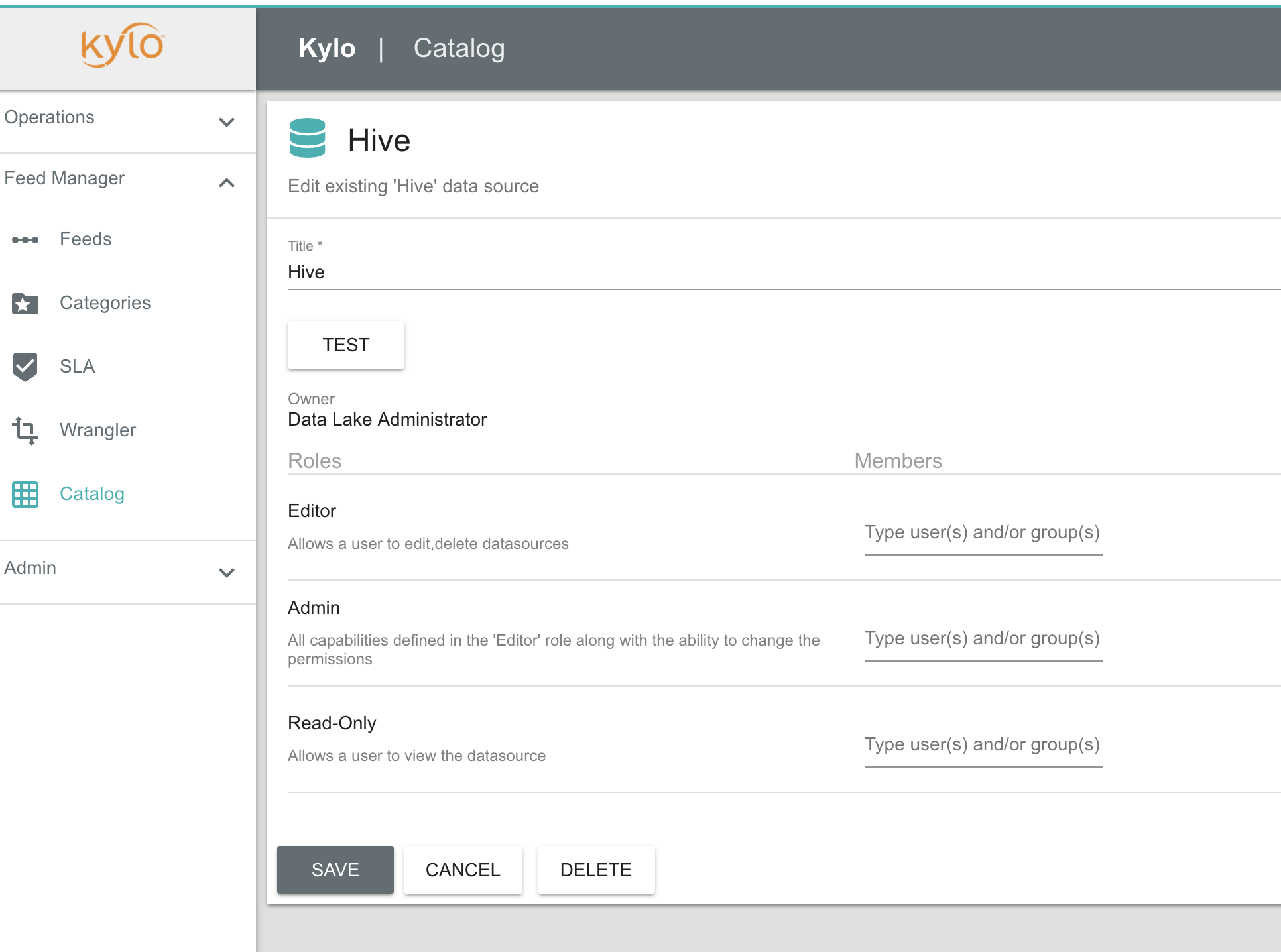

If entity access is enabled, the Hive data source will no longer be accessible to all users by default. To grant permissions to Hive go to the Catalog page and click the pencil icon to the left of the Hive data source. This page will provide options for granting access to Hive or granting permissions to edit the data source details.

Note

If, after the upgrade, you experience any UI issues in your browser then you may need to empty your browser’s cache.

Mandatory Template Updates

Once Kylo is running, the following templates need to be updated.

- Advanced Ingest

- Data Ingest

- Data Transformation

- S3 Data Ingest (S3 Data Ingest documentation)

- XML Ingest

Use the new Repository feature within Kylo to import the latest templates.

Highlight Details

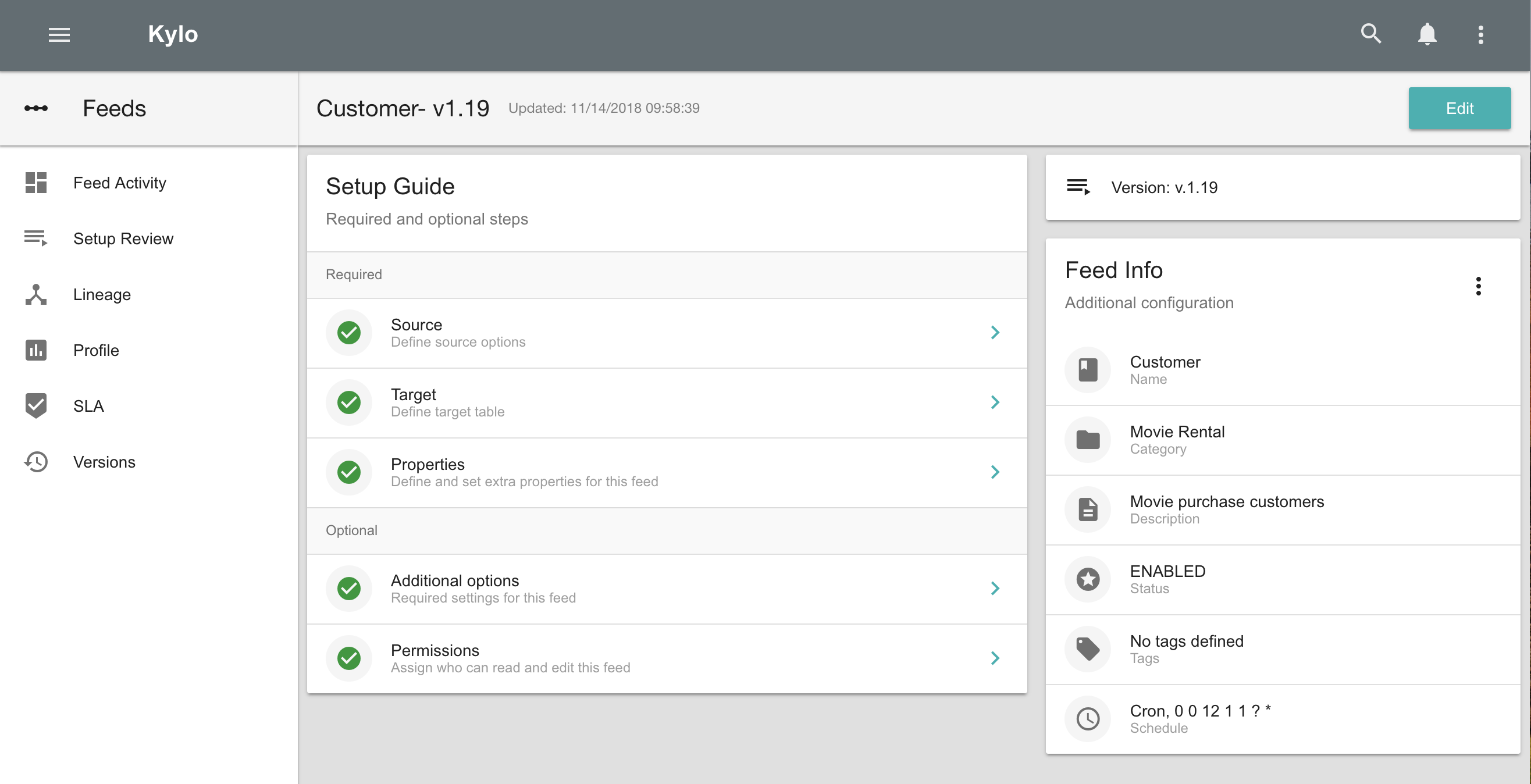

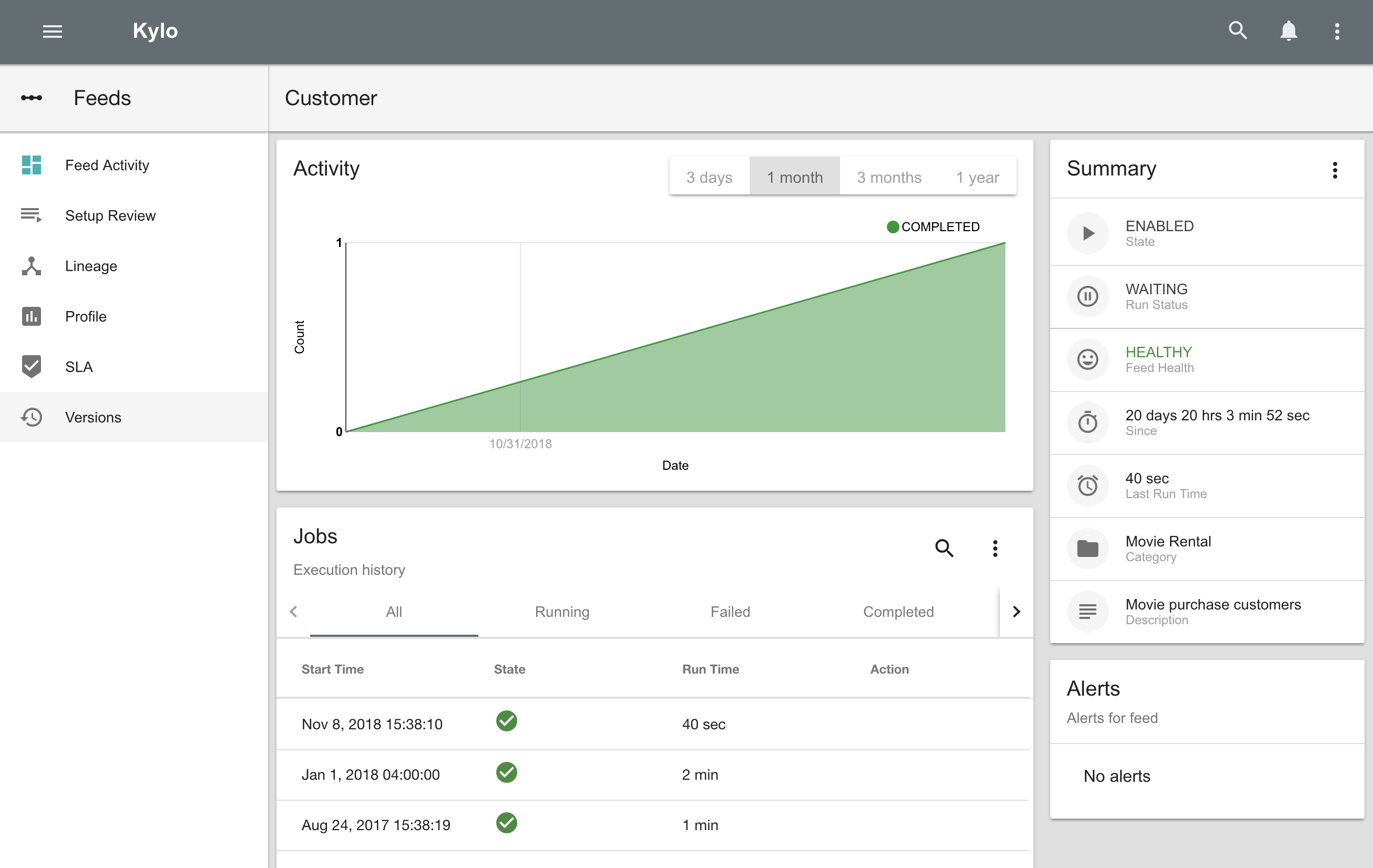

New User Interface

Kylo now has a new user interface for creating and editing feeds.

Editing feeds is separate from deploying to NiFi. This allows you to edit and save your feed state and deploy it when ready.

Centralized feed information. The feed activity view of the running feed jobs is now integrated with the feed setup.

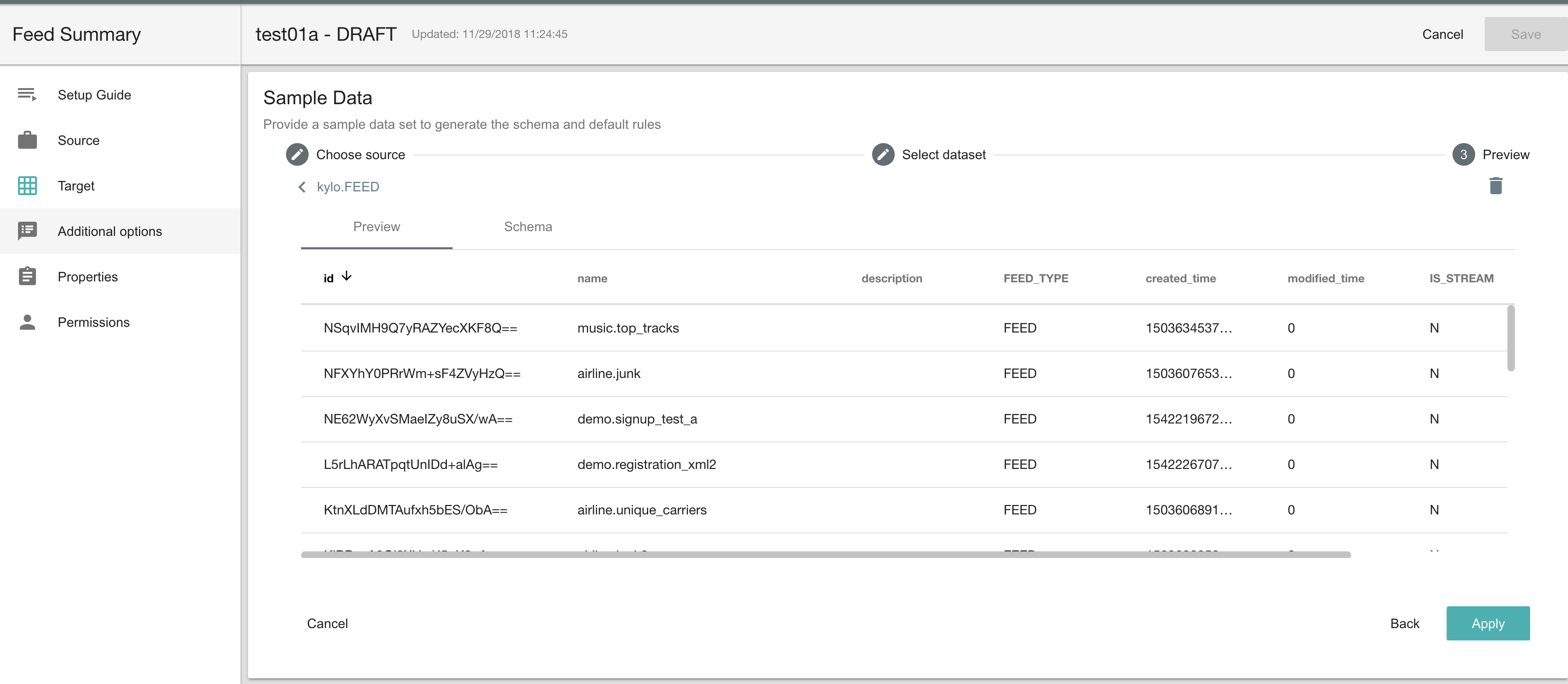

Catalog

Kylo allows you to create and browse various catalog sources. Kylo ships with the following datasource connectors: Amazon S3, Azure, HDFS, Hive, JDBC, Local Files

During feed creation and data wrangling you can browse the catalog to preview and select specific sources to work with:

Note: Kylo Datasources have been upgraded to a new Catalog feature. All legacy JDBC and Hive datasources will be automatically converted to catalog data source entries.

Wrangler

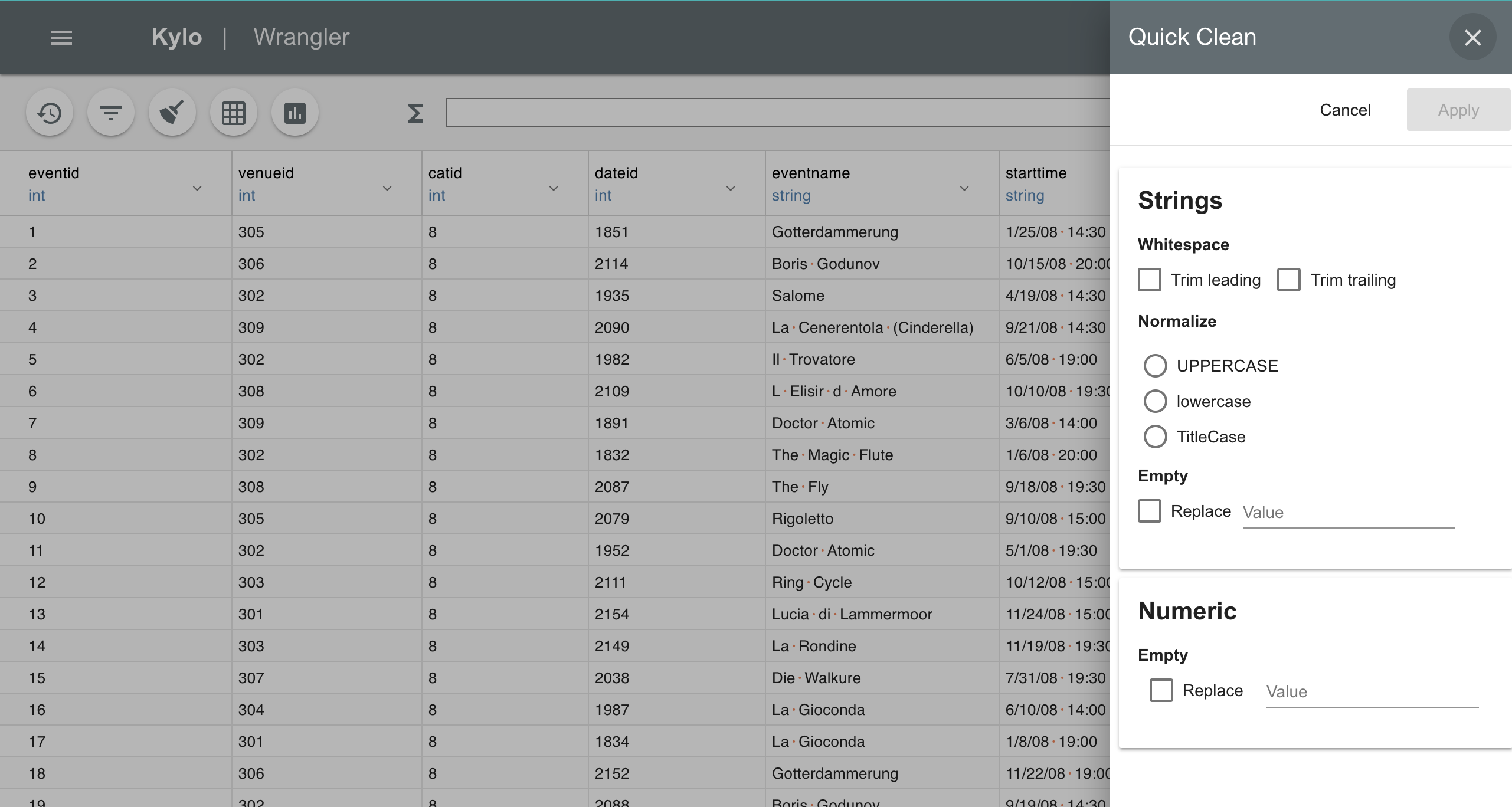

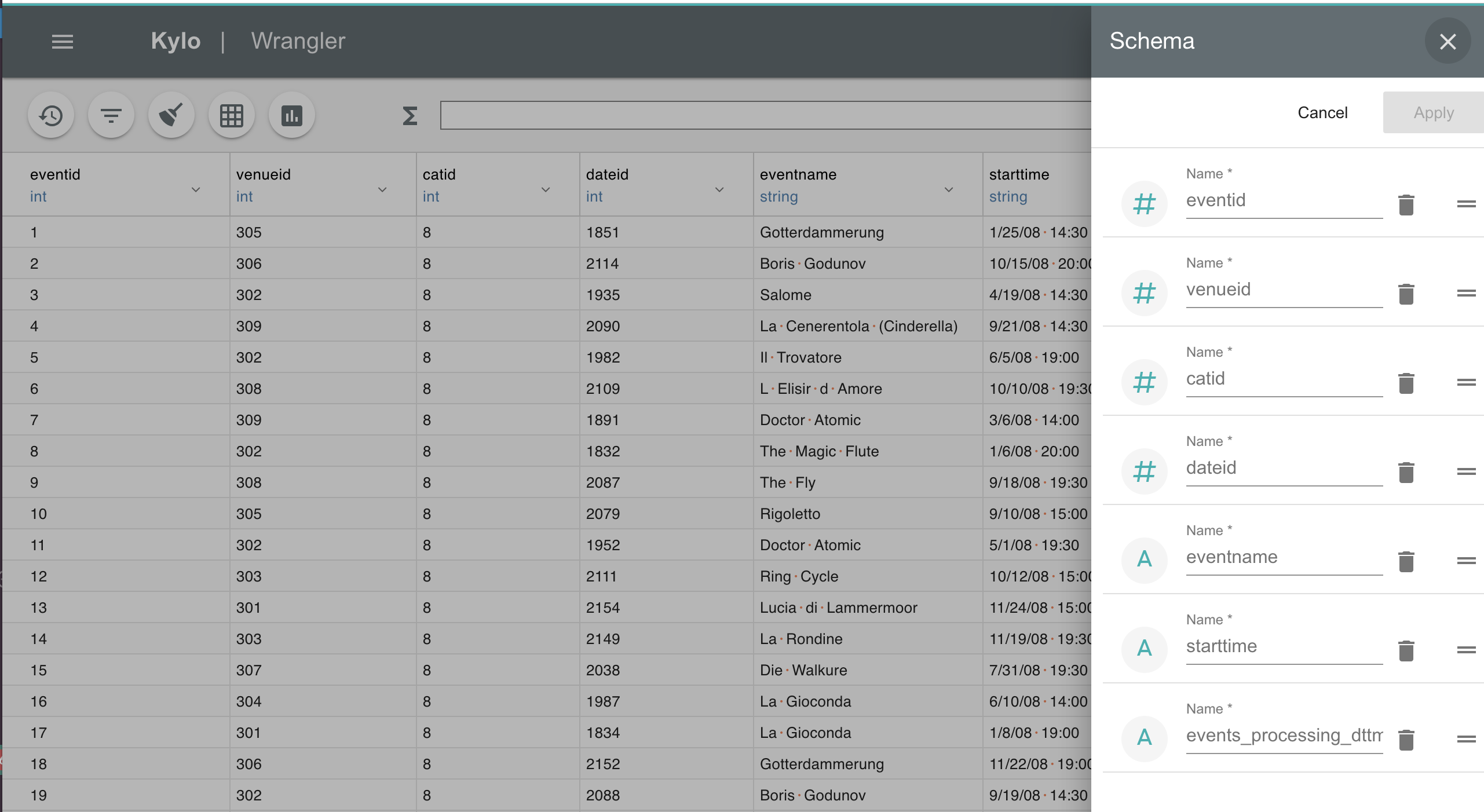

The Wrangler has been upgraded with many new features.

- New quick clean feature allows you to modify the entire dataset